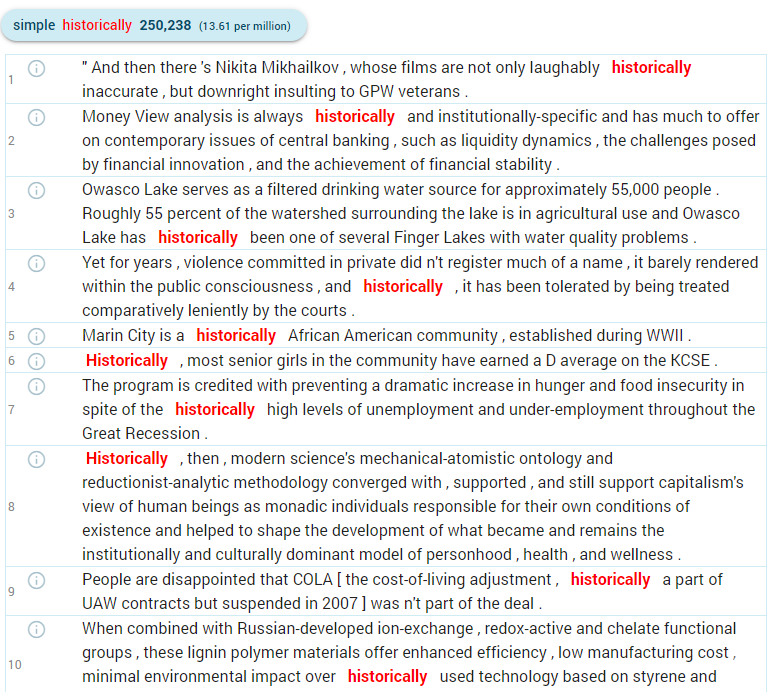

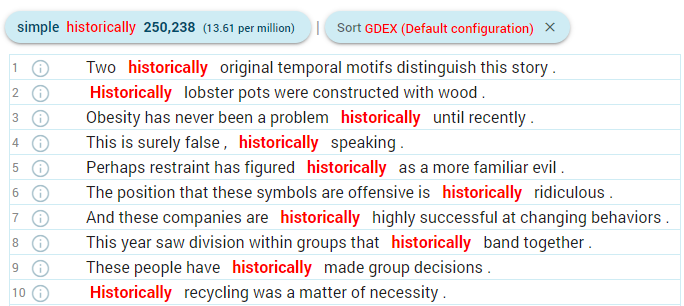

GDEX configuration introduction

GDEX configuration files are written in YAML, a human-readable (and human-editable) format that is also suitable for efficient machine processing. The actual formula for calculating sentence scores is an expression in the Python programming language. Its syntax is limited to basic mathematical and logical operations, as well as function calls to pre-defined GDEX classifiers. Several variables, such as the values of positional attributes, are available in the formula. Named variables defined in the configuration file, typically regular expressions, can also be referenced in the formula.

The file contains two top-level keys – the mandatory formula and optional variables. Unless the formula fits on a single line, it must be preceded by the > YAML symbol for multi-line values. YAML does not allow the tab character, so you must use spaces for indentation. This is what a simple configuration file may look like:

    formula: >
      (50 * is_whole_sentence() * blacklist(words, illegal_chars) * blacklist(lemmas, parsnips)
      + 50 * optimal_interval(length, 10, 14)
      * greylist(words, rare_chars, 0.1)
      * greylist(tags, pronouns, 0.1)
      ) / 100

    variables:
      illegal_chars: ([<|][>/\^@])
      rare_chars: ([A-Z0-9'.,!?)(;:-])
      pronouns: PRON.*
      parsnips: ^(tory|whisky|cowgirl|meth|commie|bacon)$

The formula must produce a number between 0 (worst) and 1 (best). Values outside this range are clamped to the nearest limit.
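To make the arithmetic concrete, the sketch below plugs hypothetical classifier results into the structure of the example formula and then clamps the result. The names `clamp_score` and `example_formula` and the sample inputs are illustrative assumptions, not part of GDEX.

```python
def clamp_score(raw_score):
    """Limit a raw formula result to the range 0..1 (hypothetical helper)."""
    return max(0.0, min(1.0, raw_score))


def example_formula(whole, no_illegal, no_parsnips, length_ok, rare_score, pron_score):
    """Mirror the structure of the example formula using precomputed classifier results."""
    return (50 * whole * no_illegal * no_parsnips
            + 50 * length_ok * rare_score * pron_score) / 100


# A whole sentence of optimal length with one rare character (greylist result 0.9):
# both terms contribute.
good = clamp_score(example_formula(1, 1, 1, 1.0, 0.9, 1.0))

# A fragment containing an illegal character: the first term drops to 0.
fragment = clamp_score(example_formula(0, 0, 1, 1.0, 0.9, 1.0))
```

Because every classifier in this formula returns a value between 0 and 1 and the two weights sum to 100, the example already stays within range; clamping only matters for formulas whose weights do not balance this way.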

Apart from the variables (actually constants) defined in the configuration, these important built-in variables are available:

- length – sentence length (number of tokens, including punctuation)

- kw_start and kw_end – the start and end positions of the keyword (range: 0–length)

- words, tags, lemmas, lemposs, lemma_lcs – lists of positional attribute values, one item per token (the list name is the attribute name + “s”)

The variables defined in the example configuration above are:

- illegal_chars – characters that must not appear in the sentence at all (sentences containing them are penalized)

- rare_chars – characters that should be avoided, but not as strictly as illegal_chars

- pronouns – a pattern for PoS tags; in this case, it matches all tokens with the PoS tag PRON

- parsnips – a list of words from taboo topics; sentences containing these words are penalized

This is only a general example of how variables in a GDEX configuration can be used and named. You can name them differently or add more variables to check further sentence features.

The attribute lists can be used as a whole (for example as a parameter to a classifier) or you can even access individual tokens using standard Python syntax. For example, words[0] is the first word in the sentence and tags[-1] is the tag of the last token.
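As a small illustration of this indexing, the snippet below uses made-up attribute lists for a four-token sentence. The token and tag values are hypothetical; in a real configuration, GDEX supplies these lists for each sentence.

```python
# Hypothetical attribute lists for one sentence (values invented for illustration).
words = ["The", "cat", "sleeps", "."]
tags = ["DET", "NOUN", "VERB", "PUNCT"]
length = len(words)      # number of tokens, including punctuation

first_word = words[0]    # first word in the sentence
last_tag = tags[-1]      # tag of the last token
```

All attribute lists describe the same tokens, so the same index refers to the same token in each list.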

Classifiers

blacklist

blacklist(tokens, pattern) returns 1 if none of the tokens (e.g. words, lemmas, etc.) matches pattern (a regular expression), and 0 otherwise.
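The behaviour described above can be approximated as follows. This is a minimal sketch, not the GDEX implementation; in particular, it assumes the pattern may match anywhere in a token (`re.search`), whereas the real classifier may anchor the pattern differently.

```python
import re


def blacklist(tokens, pattern):
    """Return 1 if no token matches the pattern, 0 otherwise (illustrative sketch)."""
    regex = re.compile(pattern)
    # Assumption: a match anywhere inside the token counts as a hit.
    return 0 if any(regex.search(token) for token in tokens) else 1


parsnips = r"^(tory|whisky|cowgirl|meth|commie|bacon)$"
clean = blacklist(["tea", "and", "biscuit"], parsnips)
tainted = blacklist(["tea", "and", "whisky"], parsnips)
```

Since the result is 0 or 1, multiplying a formula term by a blacklist call switches that term off entirely for any sentence with a matching token.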

greylist

greylist(tokens, pattern, penalty) is similar to blacklist, but you can specify a penalty that is subtracted from 1 for each token matching pattern, down to a minimum of 0. With a penalty of 1, it behaves like a blacklist.
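A rough sketch of this scoring is shown below. It is not the GDEX implementation and, as an assumption, counts a token as matching when the pattern is found anywhere inside it.

```python
import re


def greylist(tokens, pattern, penalty):
    """Subtract `penalty` from 1 per matching token, never going below 0 (sketch)."""
    regex = re.compile(pattern)
    # Assumption: a match anywhere inside the token counts as a hit.
    hits = sum(1 for token in tokens if regex.search(token))
    return max(0.0, 1.0 - penalty * hits)


two_hits = greylist(["Anna", "met", "Bob", "today"], r"[A-Z]", 0.1)
as_blacklist = greylist(["Anna", "met", "Bob", "today"], r"[A-Z]", 1)
```

With a penalty of 0.1, ten or more matching tokens reduce the score to 0.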

optimal_interval

optimal_interval(value, low, high) returns 1 if value is between low and high. Outside this range, the score linearly rises from 0 at low/2 to 1 at low and falls from 1 at high to 0 at 2*high. For value lower than low/2 or higher than 2*high, the score is 0. Usually used with length.
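The piecewise-linear shape described above can be written out as follows. This is an illustrative sketch under the assumption that low and high are positive and low is not greater than high; it is not the GDEX source.

```python
def optimal_interval(value, low, high):
    """Score 1 inside [low, high], falling linearly to 0 at low/2 and 2*high (sketch)."""
    if low <= value <= high:
        return 1.0
    if value < low:
        half_low = low / 2
        if value <= half_low:
            return 0.0
        # Rising edge: 0 at low/2, 1 at low.
        return (value - half_low) / (low - half_low)
    double_high = 2 * high
    if value >= double_high:
        return 0.0
    # Falling edge: 1 at high, 0 at 2*high.
    return (double_high - value) / (double_high - high)
```

With the limits from the example configuration, `optimal_interval(length, 10, 14)` gives full credit to sentences of 10 to 14 tokens, half credit at 7.5 or 21 tokens, and none at 5 tokens or fewer or at 28 tokens or more.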

is_whole_sentence

is_whole_sentence() (mind the parentheses) returns 1 if the sentence starts with a capitalized word and ends with a full stop, question mark or exclamation mark. Otherwise, it returns 0.
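The check can be pictured roughly as below. Unlike the real classifier, which takes no arguments, this sketch receives the word list explicitly as a hypothetical `words` parameter, and it assumes the final punctuation is either a separate last token or attached to the last word.

```python
def is_whole_sentence(words):
    """Return 1 for a capitalized start and sentence-final punctuation, else 0 (sketch)."""
    if not words:
        return 0
    starts_capitalized = words[0][:1].isupper()
    ends_properly = words[-1].endswith((".", "?", "!"))
    return 1 if starts_capitalized and ends_properly else 0
```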

word_frequency

word_frequency(word) returns the absolute frequency of the given word in the corpus. word_frequency(word, normalize) returns the relative frequency per normalize tokens. For example: word_frequency(words[1], 1000)
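The relation between the absolute and the relative frequency can be illustrated with a toy corpus. The factory function `make_word_frequency` and the eight-token corpus are assumptions made for this sketch; the real classifier looks up frequencies in the corpus that GDEX is running on.

```python
from collections import Counter


def make_word_frequency(corpus_tokens):
    """Build a word_frequency function over a toy corpus (hypothetical helper)."""
    counts = Counter(corpus_tokens)
    corpus_size = len(corpus_tokens)

    def word_frequency(word, normalize=None):
        absolute = counts[word]
        if normalize is None:
            return absolute
        # Relative frequency: occurrences per `normalize` tokens of the corpus.
        return absolute / corpus_size * normalize

    return word_frequency


word_frequency = make_word_frequency("the cat saw the dog near a tree".split())
absolute = word_frequency("the")
per_thousand = word_frequency("the", 1000)
```

Here the word occurs 2 times in 8 tokens, so the relative frequency per 1000 tokens is 250.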

keyword_position

keyword_position() returns a number between 0 and 1, starting at zero for a keyword at the beginning of the sentence, and rising in equal increments (depending on the length of the sentence) to 1 for a keyword at the end of the sentence.
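One plausible reading of this description is sketched below. It assumes that the position is derived from a zero-based kw_start and computed as kw_start / (length - 1); the actual GDEX calculation may differ, and the explicit parameters are hypothetical because the real classifier takes no arguments.

```python
def keyword_position(kw_start, length):
    """Map the keyword's token index to 0..1 in equal steps (assumed formula)."""
    if length <= 1:
        # A one-token sentence has a single possible position.
        return 0.0
    return kw_start / (length - 1)
```

Under this assumption, a five-token sentence yields the values 0, 0.25, 0.5, 0.75 and 1 for a keyword at each successive position.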

keyword_repetition

keyword_repetition() returns the number of occurrences of the keyword in the sentence.
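Counting of this kind can be sketched as follows. The explicit `lemmas` and `keyword_lemma` parameters are hypothetical (the real classifier takes no arguments), and comparing lemmas by exact equality is an assumption about how occurrences are identified.

```python
def keyword_repetition(lemmas, keyword_lemma):
    """Count how many tokens in the sentence share the keyword's lemma (sketch)."""
    return sum(1 for lemma in lemmas if lemma == keyword_lemma)


repeated = keyword_repetition(["the", "dog", "chase", "another", "dog", "."], "dog")
```

Because the result is a count rather than a value between 0 and 1, a formula would normally pass it through another function, for example optimal_interval, before using it as a factor.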